AI, Credibility, and Culture: How Synthetic Media Rewired Public Trust in Mass Communication

Video created by Nevada Gray Studio using Google Veo 3. Example: Even minor errors, such as misspelled text in AI-generated video, illustrate how automation without human review can unintentionally undermine credibility, reinforcing the need for thoughtful human oversight in trust-driven communication environments.

Introduction: Trust in a Synthetic Media Environment

Mass media once operated as a stabilizing cultural institution. That role has fractured in an era in which AI-generated content, deepfakes, and algorithmic amplification shape public perception in real time. Deloitte’s 2025 Digital Media Trends report describes how audiences navigate media environments where authenticity is questioned, verification is expected, and trust is no longer automatically granted to traditional institutions (Widener et al., 2025). Communicators now operate within a system in which synthetic narratives circulate as quickly as verified information, redefining cultural expectations of credibility and social proof.

How Synthetic Media Reshaped Public Expectation of Truth

Advances in generative AI have changed society’s expectations of what media should provide, most notably transparency and verifiable authenticity. A 2025 Pew Research Center analysis identifies declining confidence in traditional news sources among younger generations, who increasingly rely on creators, micro-influencers, and algorithmically surfaced content for information (Eddy & Shearer, 2025). Diaz Ruiz (2025) expands on this by showing how automated advertising and programmatic media inadvertently support misinformation when accountability structures lack human oversight. Synthetic video and AI-driven image manipulation intensify these dynamics by making it harder to distinguish real narratives from fabricated ones.

A widely cited example is the proliferation of political deepfakes during the 2024 election cycle. Writing for Journalist’s Resource, Rehan (2024) examined several such incidents, including AI-generated robocalls attributed to public figures who never recorded those messages, and showed how incidents like these recalibrate expectations around authenticity. Audiences now assume that any media asset can be altered, which heightens skepticism and requires communicators to build verification and traceability into their workflows (Best, 2025).

Cultural Effects: A Public That Requires Proof Before Belief

Synthetic media reconditions culture by training audiences to question the legitimacy of everything they encounter online, a shift intensified by what Maphosa (2024) identifies as growing concerns about autonomy, privacy, and trust in AI ecosystems. Pew Research Center data show that credibility increasingly migrates from institutions to individual creators who project transparency and relatability (Eddy & Shearer, 2025), while Diaz Ruiz (2025) demonstrates how automated ecosystems, or silos, elevate sensational or misleading content, turning misinformation into a cultural force through repeated exposure. Together, these dynamics create an expectation of proof: users look for content credentials, traceable sourcing, or watermarking before accepting digital material as genuine. This cultural reorientation produces a communication landscape defined by continuous authentication, shaping both how media is created and how audiences interpret it. In this environment, transparency functions as the primary currency of credibility, and communicators must demonstrate authenticity through clear identification, accountable data practices, and verifiable evidence at every consumer touchpoint (Content Authenticity Initiative, n.d.).

Brené Brown’s talk The Anatomy of Trust, originally delivered for SuperSoul Sessions, introduces the BRAVING framework and frames trust as an intentional practice. Its emphasis on transparency, accountability, and integrity offers a lens for restoring public confidence across contemporary mass communication systems.
Reference: Uncommon Shapes. (2020, April 24). Anatomy of trust (abridged) [Video]. YouTube. https://www.youtube.com/watch?v=OqB5CEkPlI4

Trust as a Negotiated Cultural Practice: Building Trust-Centered Media Systems

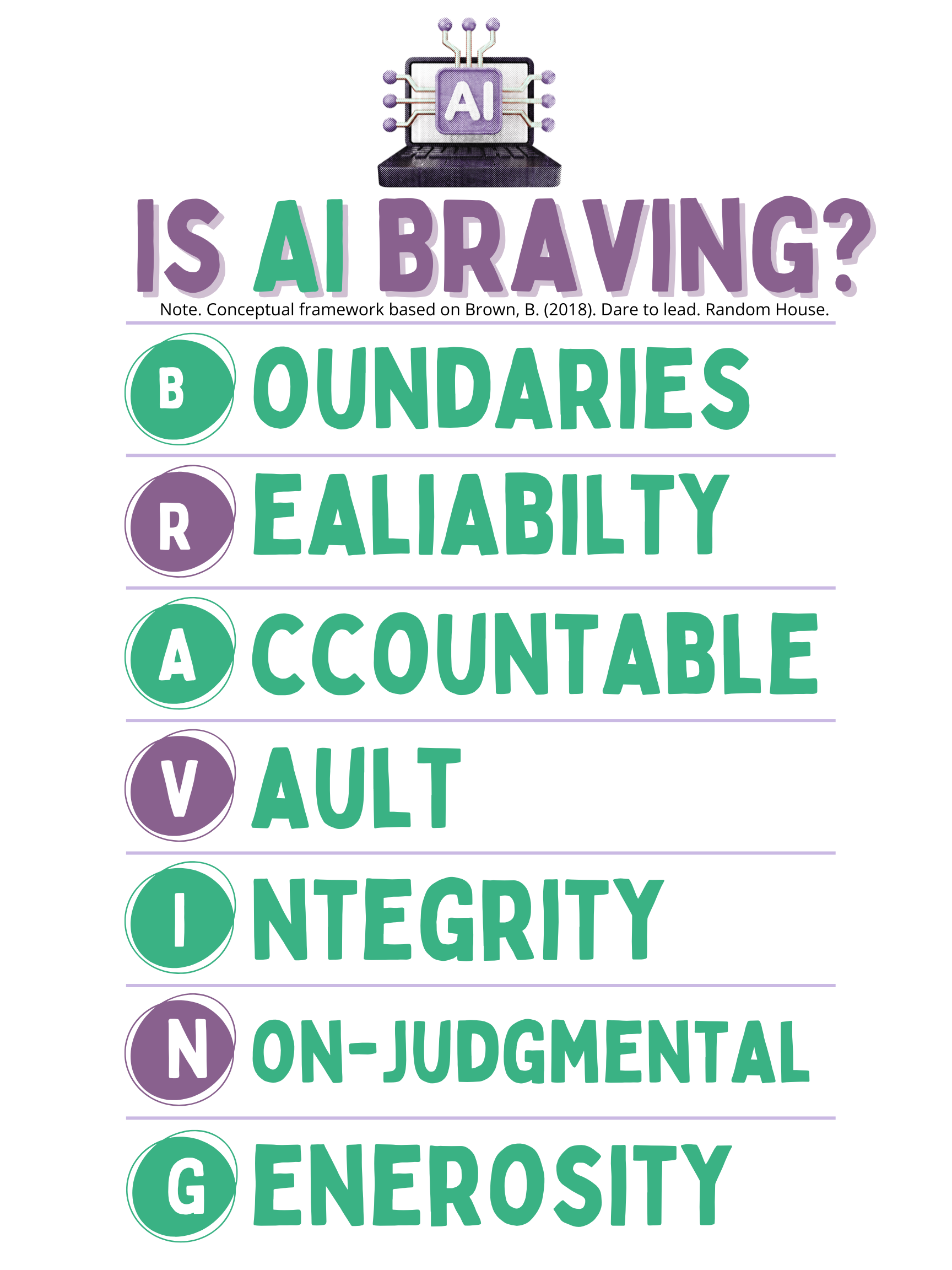

AI has shifted trust from a passive expectation to an intentional cultural behavior, a change supported by Deloitte’s finding that recommendation-driven ecosystems amplify personal preference, reduce shared narratives, and elevate skepticism toward mainstream sources (Widener et al., 2025). Credibility is now evaluated through perceived transparency, relational closeness, and consistent communication cues across platforms, so trust must be actively earned. A trust-centered improvement plan therefore requires three components: AI content watermarking and provenance standards such as those outlined by the Content Authenticity Initiative (n.d.); platform-level transparency layers that reveal personalization inputs and sourcing, as recommended by Diaz Ruiz (2025); and audience education modules that increase AI literacy, as advocated by Maphosa (2024).

Designing such a plan is complicated by the fact that trust in AI systems is not universal. Cetinkaya and Krämer (2025) identified two distinct user groups with opposing priorities: some users treat transparency and certification as trust signals, while others prioritize fairness and technical reliability, which makes it difficult to design AI systems that satisfy competing expectations of trust. Within marketing, business, and journalism, Brené Brown’s BRAVING framework (boundaries, reliability, accountability, vault, integrity, non-judgment, and generosity) offers a practical model for rebuilding public trust by operationalizing reliability, accountability, transparency, and integrity as core standards for mass communication in algorithm-driven media environments (Brown, n.d.).

Figure 1

BRAVING Framework for Trust in Algorithm-Driven Mass Communication

Note. This original graphic applies Brené Brown’s BRAVING framework to contemporary mass communication contexts in marketing, business, and journalism, illustrating how trust can be operationalized through reliability, accountability, transparency, and integrity within AI-mediated media systems. Conceptual framework based on Brown (n.d.).

Communication teams will also require training in rapid detection and response strategies so they can counter synthetic misinformation with traceable evidence. Together, these measures integrate ethical governance, transparency, and user education into a comprehensive framework designed to rebuild public confidence in mass communication systems.

References

Best, J. (2025, August 19). Our communications have a credibility problem. Forbes Communications Council. https://www.forbes.com/councils/forbescommunicationscouncil/2025/08/19/our-communications-have-a-credibility-problem/

Brown, B. (n.d.). Resources. https://brenebrown.com/resources/

Brown, B. (2015, November 1). SuperSoul Sessions: The anatomy of trust [Video]. https://brenebrown.com/videos/anatomy-trust-video/

Cetinkaya, N. E., & Krämer, N. (2025). Between transparency and trust: Identifying key factors in AI system perception. Behaviour & Information Technology, 1–15. https://doi.org/10.1080/0144929X.2025.2533358

Content Authenticity Initiative. (n.d.). Content authenticity initiative. https://www.contentauthenticity.org/

Diaz Ruiz, C. A. (2025). Disinformation and fake news as externalities of digital advertising: A close reading of sociotechnical imaginaries in programmatic advertising. Journal of Marketing Management, 41(9/10), 807–829. https://doi.org/10.1080/0267257X.2024.2421860

Eddy, K., & Shearer, E. (2025, October 29). How Americans’ trust in information from news organizations and social media sites has changed over time. Pew Research Center. https://www.pewresearch.org/short-reads/2025/10/29/how-americans-trust-in-information-from-news-organizations-and-social-media-sites-has-changed-over-time/

Maphosa, V. (2024). The rise of artificial intelligence and emerging ethical and social concerns. AI, Computer Science and Robotics Technology Journal, Article 485. https://doi.org/10.5772/acrt.20240020

Rehan, M. (2024, February 16). How AI deepfakes threaten the 2024 elections. Journalist’s Resource. https://journalistsresource.org/home/how-ai-deepfakes-threaten-the-2024-elections/

Shanmugasundaram, M., & Tamilarasu, A. (2023). The impact of digital technology, social media, and artificial intelligence on cognitive functions: A review. Frontiers in Cognition, 1–11. https://doi.org/10.3389/fcogn.2023.1203077

Widener, C., Arbanas, J., Van Dyke, D., Arkenberg, C., Matheson, B., & Auxier, B. (2025). 2025 digital media trends: Social platforms are becoming a dominant force in media and entertainment. Deloitte Insights. https://www.deloitte.com/us/en/insights/industry/technology/digital-media-trends-consumption-habits-survey/2025.html

Nevada Gray Studio.